Drill-down

Think of AI agents like kitchen appliances. Within the family of "blenders" there are Nutribullets, Vitamixes, and Magic Bullets. They're all blenders, but you'd choose differently depending on what you're making. AI works the same way: there are a handful of underlying families, each with its own specific products.

Language models (LLMs). The workhorse family. They process and generate text. Underneath ChatGPT is a model called GPT (made by OpenAI). Underneath Claude is Claude (Anthropic). Underneath Gemini is Gemini (Google). The product is the shop window; the model is what's behind the counter. Products: ChatGPT, Claude, Gemini, Perplexity, Copilot. Use for: thinking, writing, summarising, explaining, deciding. If in doubt, start here.

Image models. A family (mostly built on a technique called diffusion) that produces images from text descriptions or reference pictures. Products: Midjourney, Flux, Google Imagen, Ideogram, Adobe Firefly. Use for: illustrations, logos, concept art, Christmas cards.

Video models. A newer family that produces short clips, typically 5–10 seconds long. Products: Kling, Runway, Luma, Sora, Veo. Use for: social content, short montages. Not yet great for long-form.

Voice and audio models. Three flavours: transcription (Whisper), voice generation (ElevenLabs), music generation (Suno, Udio).

Decision-making systems (reinforcement learning). A quieter family that learns by trial and error, guided by rewards, rather than by reading text. Famously: AlphaGo. Also used in robotics, self-driving cars, and game-playing AI. You're unlikely to use one directly, but it's worth knowing the family exists.

Scientific models. Trained to respect known scientific laws (such as the equations of physics), not just to spot patterns in data. Example: PINNs (Physics-Informed Neural Networks), used in fluid dynamics, engineering simulations, and climate modelling. They're not consumer-facing, but you'll hear more about them as industries build their own.

Rule-based systems. The oldest family. No learning, just explicit logic. Expert systems, spam filters, the decision tree in your car's fault diagnostics. Technically still AI, and in the right context, still the right answer.
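To make "no learning, just explicit logic" concrete, here is a minimal Python sketch of a rule-based spam check. The keyword list, the exclamation-mark threshold and the function name `is_spam` are hypothetical, chosen only for illustration; a real filter would use many more rules.

```python
# A minimal, hypothetical rule-based spam check.
# Every rule is written by hand; nothing is learned from data.

SUSPICIOUS_PHRASES = ("free money", "act now", "winner")  # hypothetical keyword list
MAX_EXCLAMATIONS = 3  # hypothetical threshold


def is_spam(subject: str, body: str) -> bool:
    """Return True if any hand-written rule fires."""
    text = f"{subject} {body}".lower()
    # Rule 1: a known spam phrase appears anywhere in the message.
    if any(phrase in text for phrase in SUSPICIOUS_PHRASES):
        return True
    # Rule 2: too many exclamation marks.
    if text.count("!") > MAX_EXCLAMATIONS:
        return True
    # Rule 3: the subject line is written entirely in capitals.
    if subject.isupper():
        return True
    return False


print(is_spam("Lunch on Friday?", "Are you free at noon?"))  # False
print(is_spam("You are a WINNER", "Claim your prize"))  # True
```

Every verdict can be traced back to one specific rule, which is exactly why this family is still the right answer where predictability matters more than flexibility.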

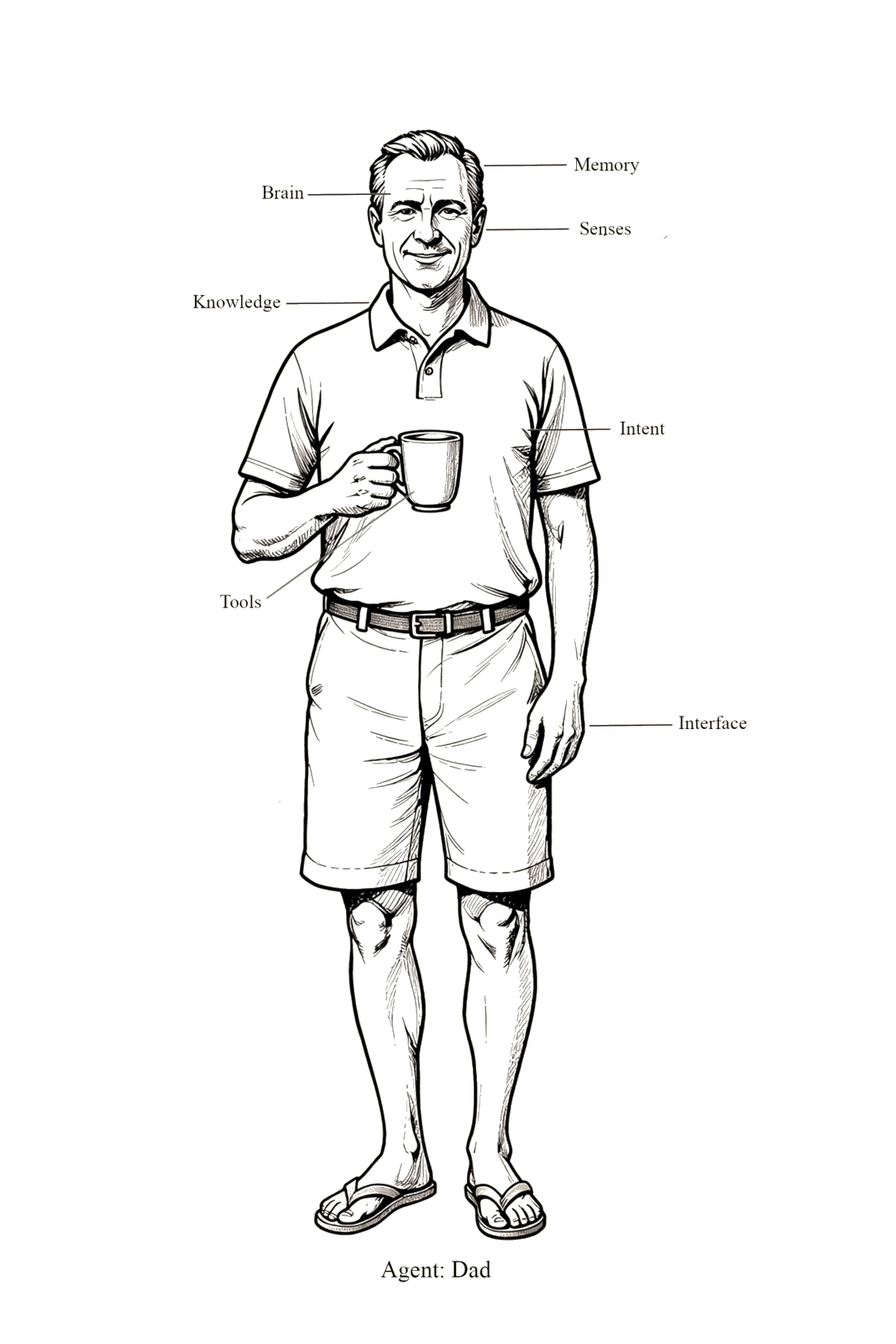

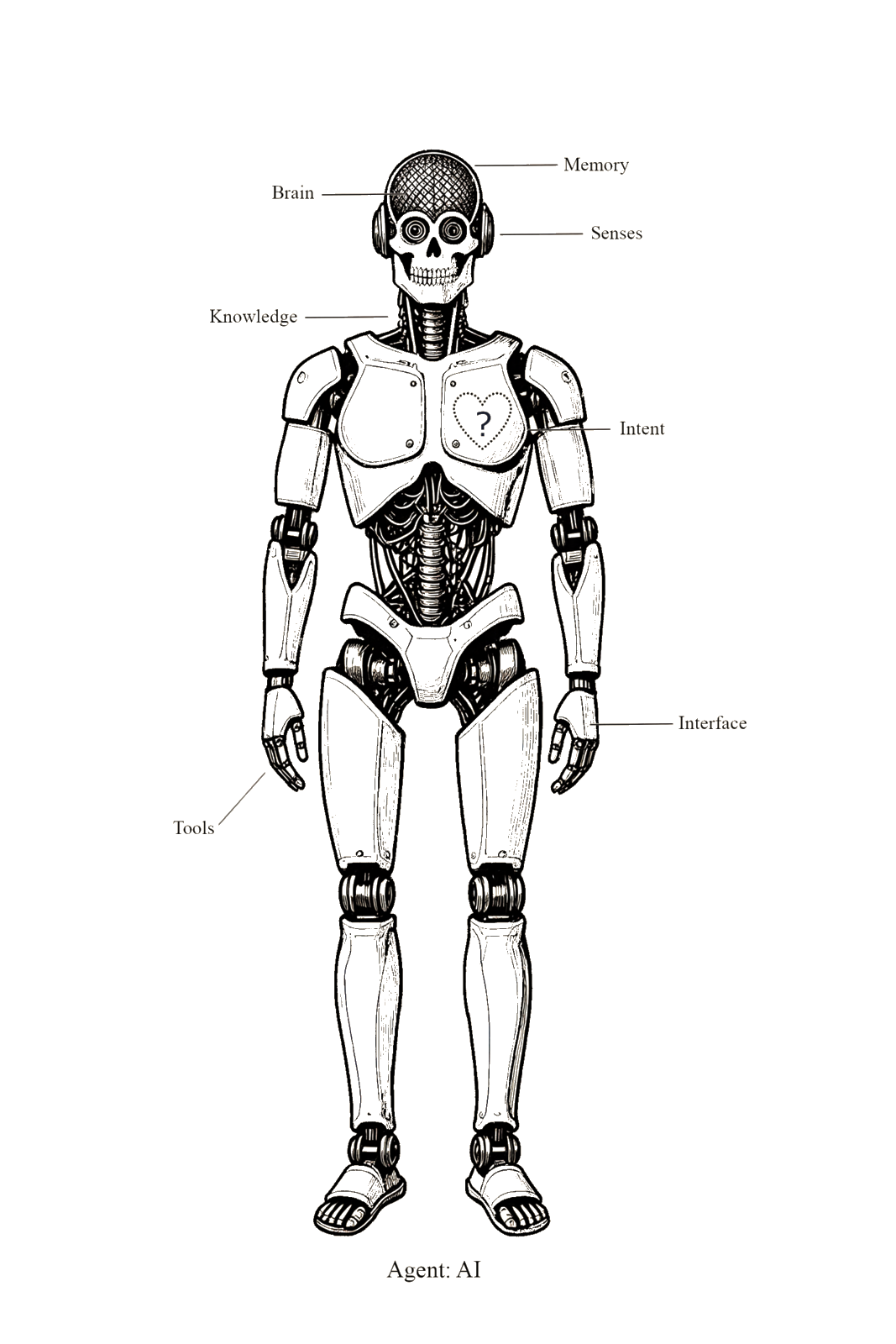

Model, product, agent: three things often confused

- A model is the underlying AI engine. Think GPT-4, Claude Sonnet, Midjourney v7. You don't usually interact with models directly.

- A product is the branded app wrapping a model. ChatGPT, Claude.ai, the Midjourney Discord bot. This is what most people mean when they say "AI."

- An agent is a model (or product) given a role, knowledge, tools, and a goal, and let loose to pursue it semi-autonomously. Agents are what Keith's setup uses. A chat window is not an agent; an agent is a chat window with intent, memory, and hands.

This manual uses "Agent" as the friendly shorthand for "the thing doing the work," because the word has caught on generally. But when someone in the group says "I built an agent," they probably mean the third thing, not the first two.

Persona files: giving each agent a "who am I"

Every serious agent carries a persistent set of instructions defining who it is, what it does, what it doesn't do, and how it writes. Different tools and frameworks call this by different names, but it's the same idea:

- CLAUDE.md: Claude Code projects
- soul.md: Hermes and other character-first frameworks
- AGENTS.md: emerging convention in several open-source stacks
- .cursorrules: the Cursor IDE
- .clinerules / .windsurfrules: Cline, Windsurf, similar tools
- Custom Instructions: the field in ChatGPT Projects and Claude Projects
- System prompt: the generic name that sits underneath all of the above

The file (or field) is where you write things like "You are a research analyst. Respond concisely. Never invent citations. Flag uncertainty explicitly." A well-written persona file of around 200 words can be the difference between a competent agent and a generic assistant. Write it badly, or skip it, and the agent defaults to being a vaguely helpful, verbose, uncertain non-specialist.
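Whatever a tool calls the file, its contents generally end up in front of the model as the system prompt, ahead of whatever you type. The Python sketch below illustrates that idea only. The generic role/content message format, the file name `persona.md` and the function `build_messages` are assumptions for illustration, not any particular tool's or provider's API; real providers differ in the details (some take the system prompt as a separate field).

```python
# A minimal sketch of how a persona file becomes the system prompt.
# Assumption: a generic chat format of {"role": ..., "content": ...} messages.
from pathlib import Path
import tempfile

PERSONA = """You are a research analyst.
Respond concisely.
Never invent citations.
Flag uncertainty explicitly."""


def build_messages(persona_path: Path, user_message: str) -> list[dict[str, str]]:
    """Read the persona file and place it ahead of the user's message."""
    persona = persona_path.read_text(encoding="utf-8").strip()
    return [
        {"role": "system", "content": persona},  # who the agent is
        {"role": "user", "content": user_message},  # what the user actually asked
    ]


# Demo: a temporary file stands in for e.g. CLAUDE.md or AGENTS.md.
with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "persona.md"  # hypothetical file name
    path.write_text(PERSONA, encoding="utf-8")
    messages = build_messages(path, "Summarise this report.")

for m in messages:
    print(m["role"], "->", m["content"].splitlines()[0])
```

The point of the sketch is that the persona is not magic: it is simply text the model reads before every request, which is why a few well-chosen sentences in it change behaviour so much.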

Foundation models and where this is heading. One of the newer concepts in this space is the foundation model: a single large model trained so broadly that it can be adapted to many tasks (text, images, audio, reasoning) rather than specialised for one. GPT-4, Gemini, and Claude are all foundation models. The taxonomy above is still useful, but expect the families to blur into one another as single models learn to handle more of these tasks at once.